Artificial intelligence should be understood not merely as a collection of algorithms, but as a general-purpose technology—comparable in scope and impact to electricity, the internet, or the internal combustion engine. Its defining characteristic is not task specificity, but its capacity to reshape multiple sectors simultaneously through learning, adaptation, and decision-making under uncertainty.

At its core, AI refers to computational systems capable of performing tasks that traditionally require human cognition: perception, reasoning, learning, and action. What distinguishes contemporary AI from earlier symbolic approaches is the shift toward data-driven learning, where systems infer structure, representations, and policies directly from empirical data rather than from handcrafted rules.

The modern AI ecosystem rests on three interdependent pillars: data availability, computational scalability, and learning algorithms. The convergence of large datasets, parallel computing architectures (notably GPUs and TPUs), and advances in optimization theory has enabled models to scale from thousands to billions of parameters. This scaling phenomenon has revealed an important empirical insight: capability often emerges not from architectural novelty alone, but from systematic increases in model size, training data, and compute.

From a methodological standpoint, machine learning occupies the center of contemporary AI. Supervised learning dominates applications where labeled data is abundant, such as image recognition and speech transcription. Unsupervised and self-supervised learning, by contrast, address representation learning when labels are scarce or expensive. Reinforcement learning extends the paradigm further by framing intelligence as sequential decision-making, where agents learn policies by interacting with an environment and receiving reward signals.
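To make the reinforcement-learning framing concrete, the sketch below implements tabular Q-learning, one standard RL algorithm, on a hypothetical five-state corridor. The environment, the reward, and the hyperparameters (`alpha`, `gamma`, `epsilon`) are illustrative assumptions rather than anything prescribed here; the point is that the agent is never told the correct action and instead infers a policy from the rewards it receives by acting.

```python
import random

# Hypothetical toy environment: a corridor of states 0..4.
# The agent starts at state 0; reaching state 4 ends the episode with reward 1.
N_STATES = 5
GOAL = N_STATES - 1
ACTIONS = (0, 1)  # 0 = move left, 1 = move right


def step(state: int, action: int) -> tuple[int, float, bool]:
    """Apply an action and return (next_state, reward, done)."""
    next_state = max(0, state - 1) if action == 0 else min(GOAL, state + 1)
    done = next_state == GOAL
    reward = 1.0 if done else 0.0
    return next_state, reward, done


def q_learning(episodes: int = 500, alpha: float = 0.5, gamma: float = 0.9,
               epsilon: float = 0.2, seed: int = 0) -> list[list[float]]:
    """Learn action values Q[state][action] purely from interaction."""
    rng = random.Random(seed)
    q = [[0.0 for _ in ACTIONS] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done, steps = 0, False, 0
        while not done and steps < 100:
            # Epsilon-greedy: explore occasionally, otherwise exploit
            # (ties between equally valued actions are broken at random).
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                best = max(q[state])
                action = rng.choice([a for a in ACTIONS if q[state][a] == best])
            next_state, reward, done = step(state, action)
            # Temporal-difference target: reward plus discounted value of the best next action.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state, steps = next_state, steps + 1
    return q


def greedy_policy(q: list[list[float]]) -> list[int]:
    """Best action in each non-terminal state under the learned values."""
    return [max(ACTIONS, key=lambda a: q[s][a]) for s in range(GOAL)]


if __name__ == "__main__":
    q = q_learning()
    print(greedy_policy(q))  # prints [1, 1, 1, 1]: move right in every state
```

A supervised learner would be handed the correct action for each state as a label. Q-learning receives only the single reward at the goal and has to propagate it backwards through the discount factor `gamma`, which is what makes the problem one of sequential decision-making.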

However, AI’s significance extends well beyond technical performance. As AI systems are embedded into healthcare, finance, education, transportation, and governance, questions of alignment, accountability, and trust become central. AI systems do not operate in a vacuum; they inherit biases from data, amplify incentives embedded in objective functions, and interact with complex human institutions.

This is where interdisciplinary literacy becomes essential. Understanding AI today requires fluency not only in algorithms, but also in ethics, economics, psychology, and public policy. For example, an AI model optimized solely for accuracy may produce socially unacceptable outcomes if fairness constraints are ignored. Likewise, deployment decisions, such as where a model is used, who reviews its outputs, and how its errors are corrected, often matter more than model architecture in determining real-world impact.
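The tension between accuracy and fairness can be made concrete with a small calculation. The sketch below uses hypothetical data for two groups and measures fairness with one common criterion, the demographic parity gap (the difference in positive-prediction rates between groups). The data, the two candidate "models", and the function names are illustrative assumptions; other fairness definitions, such as equalized odds, can rank the same models differently.

```python
# Hypothetical toy data: true labels, group membership, and two candidate models' predictions.
labels = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
model_accurate = [1, 1, 1, 0, 1, 0, 0, 0]  # reproduces the labels exactly
model_parity = [1, 1, 0, 0, 1, 1, 0, 0]    # equal positive rates across groups


def accuracy(preds: list[int], labels: list[int]) -> float:
    """Fraction of predictions that match the true labels."""
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


def positive_rate(preds: list[int], groups: list[str], group: str) -> float:
    """Share of members of `group` who receive a positive prediction."""
    selected = [p for p, g in zip(preds, groups) if g == group]
    return sum(selected) / len(selected)


def demographic_parity_gap(preds: list[int], groups: list[str]) -> float:
    """Largest difference in positive-prediction rates between any two groups."""
    rates = [positive_rate(preds, groups, g) for g in sorted(set(groups))]
    return max(rates) - min(rates)


for name, preds in [("accurate", model_accurate), ("parity", model_parity)]:
    print(name, accuracy(preds, labels), demographic_parity_gap(preds, groups))
# accurate 1.0 0.5
# parity 0.75 0.0
```

Because the two hypothetical groups have different base rates of positive labels, the perfectly accurate predictor necessarily assigns them unequal positive rates, while equalizing those rates costs accuracy. Deciding which trade-off is acceptable is a normative and policy question, not a purely technical one.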

AI Scholarium positions itself within this broader intellectual landscape. Rather than treating AI as a black-box productivity tool, the platform emphasizes conceptual clarity, methodological rigor, and cross-domain understanding. Learners are encouraged to interrogate assumptions, understand trade-offs, and situate AI systems within the environments they reshape.

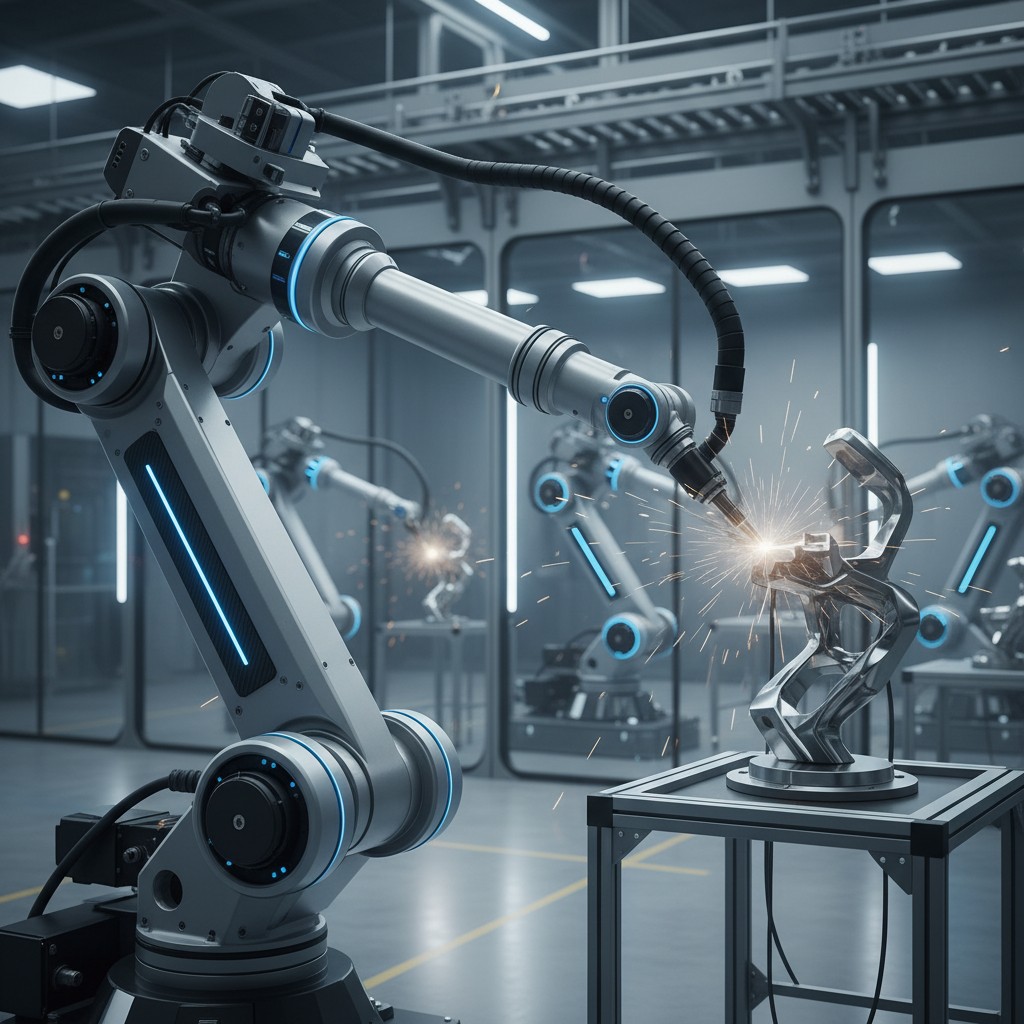

Looking forward, AI’s trajectory suggests deeper integration rather than discrete disruption. Hybrid human-AI systems, domain-specialized models, and embedded intelligence in physical infrastructure will likely define the next phase. Mastery of AI, therefore, is not about chasing tools, but about understanding principles that persist as technologies evolve.